CS 333: Safe and Interactive Robotics

Fall 2018-2019, Class: Tue, Thu 1:30-2:50pm, School of Education 334

Description:

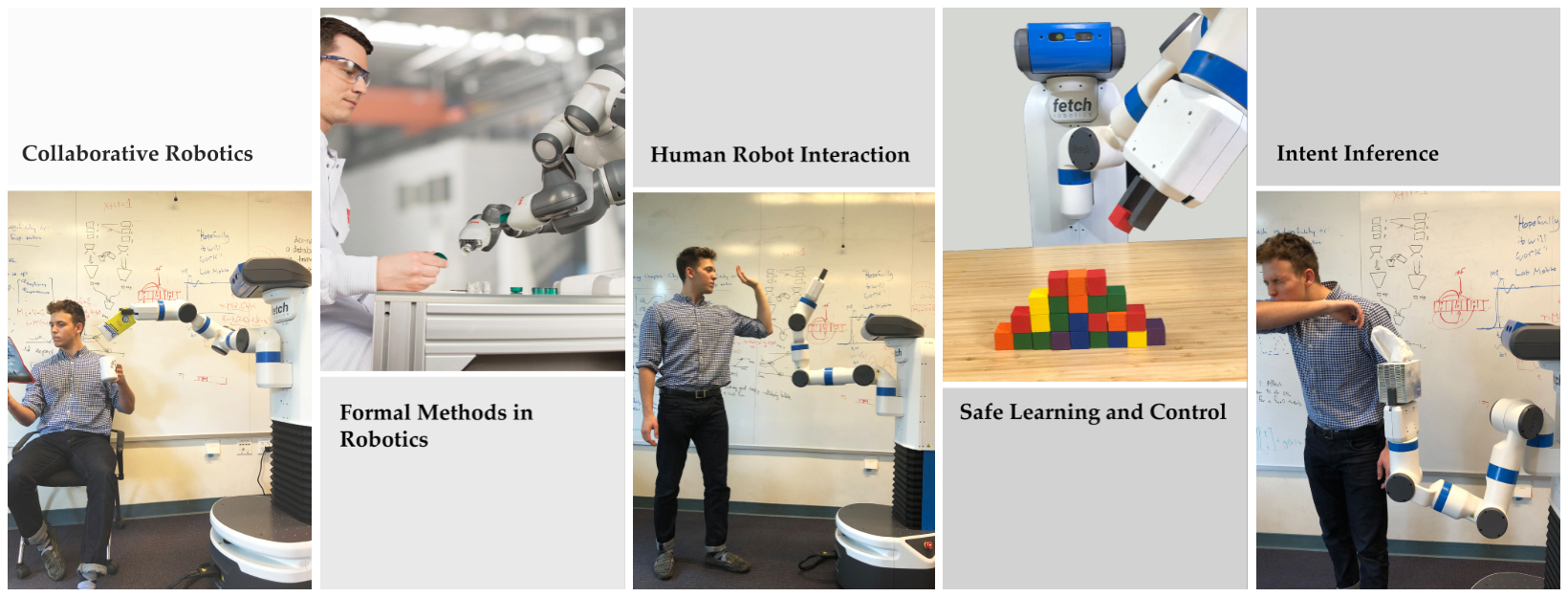

As the field of robotics is quickly emerging, one critical and challenging subject is ensuring that robotic systems can work safely with humans. This course covers a diverse set of topics that focus on addressing the most critical aspects of building interactive and safe autonomous systems. Students will practice essential research skills including critiquing papers, debating, reviewing, writing project proposals, and presenting ideas effectively.

Format:

The course is a combination of lecture and reading sessions. The lectures discuss the fundamentals of topics required for modeling and design of safe and interactive autonomy for human-robot systems. During the reading sessions, students present and discuss recent contributions in this area. Throughout the quarter, each student works on a related research project that they present at the end of the quarter. See detailed course policies.

Prerequisites:

Introductory courses in artificial intelligence and robotics are recommended, but not required.

Learning Objectives:

At the end of this course you will have gained knowledge about applications of various topics in designing safe and interactive autonomous systems.

You will also have hands-on experience working on a research project, and you are expected to gain the following research skills: analyzing literature related to a particular topic, critiquing papers, and presenting research ideas.

Staff

Timeline

| Date | Lecture | Handouts / Deadlines | Notes |
|---|---|---|---|
| Week 1 Tue, Sep 25 | Lecture: Introduction to Safe and Interactive Robotics | | Sample Long Review |
| Week 1 Thu, Sep 27 | Lecture: Motion Planning | | |
| Week 2 Tue, Oct 02 | Lecture: Trajectory Optimization | | |
| Week 2 Thu, Oct 04 | Lecture: Optimal Control and Reinforcement Learning | | |
| Week 3 Tue, Oct 09 | Reading: Task and Motion Planning | | P1 Pros: Erdem; P1 Cons: Shushman; P2 Pros: Shushman; P2 Cons: Erdem |
| Week 3 Thu, Oct 11 | Lecture: Learning from Demonstration | | |
| Week 4 Tue, Oct 16 | Lecture: Learning from Demonstration | | |
| Week 4 Thu, Oct 18 | Reading: LfD + Preference based Learning | Due: Project Proposal Reports | P1 Pros: David; P1 Cons: Minae; P2 Pros: Nick; P2 Cons: Vidush |
| Week 5 Tue, Oct 23 | Presentation | Project Proposal Presentations | |
| Week 5 Thu, Oct 25 | Reading: Safe Learning | | P1 Pros: Anand; P1 Cons: Erdem; P2 Pros: Mengxi; P2 Cons: Xieyuan |
| Week 6 Tue, Oct 30 | Lecture: Guest Lecture | Bradford Neuman, Anki | |
| Week 6 Thu, Nov 01 | Lecture: Guest Lecture | Roberto Martin-Martin, Stanford | |
| Week 7 Tue, Nov 06 | Reading: Intent Inference | | P1: Masha; P2: Nikhil; P3: Qizhan; P4: Zheqing |
| Week 7 Thu, Nov 08 | Reading: Shared Control | | P1: Minae; P2: Ashley; P3: Arthur; P4: Zixuan |
| Week 8 Tue, Nov 13 | Reading: Communication and Coordination | | P1 Pros: Kyle; P1 Cons: Nick; P2 Pros: Andy; P2 Cons: Mengxi |
| Week 8 Thu, Nov 15 | Reading: Collaboration | Due: Project Milestone Reviews | P1 Pros: Haoze; P1 Cons: Peter; P2 Pros: Sean; P2 Cons: David |
| Week 9 Tue, Nov 20 | Thanksgiving Break | | |
| Week 9 Thu, Nov 22 | Thanksgiving Break | | |
| Week 10 Tue, Nov 27 | Lecture: Formal Methods in Robotics | | |
| Week 10 Thu, Nov 29 | Presentation | Project Presentation | |
| Week 11 Tue, Dec 04 | Presentation | Project Presentation | |
| Week 11 Thu, Dec 06 | Lecture: Guest Lecture | Katherine Driggs-Campbell, Stanford | |
| Week 12 Tue, Dec 11 | Project Reports | Due: Deadline at midnight (Firm) | |

Grading Metrics

| Component | Contribution to Grade |
|---|---|
| Final Project | 50% |
| Student Presentations & Paper Reviews | 40% |
| Pop-quizzes & Class Participation | 10% |
| Total | 100% |

Project Grading

| Component | Contribution to Grade |
|---|---|
| Project Proposal Reports | 5% |
| Project Proposal Presentations | 5% |
| Project Milestone Reviews | 10% |
| Project Presentation (Possibly with Demo) | 10% |
| Final Project Report | 20% |
| Total | 50% |
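As an informal illustration of how the two tables fit together, the sketch below copies the weights into Python and checks that the project components sum to the 50% allotted to the final project. The function name `weighted_points` and the example scores are hypothetical; the actual grade computation is not specified beyond the weights above.

```python
# Weights (percent of the course grade) copied from the two tables above.
COURSE_WEIGHTS = {
    "Final Project": 50,
    "Student Presentations & Paper Reviews": 40,
    "Pop-quizzes & Class Participation": 10,
}
PROJECT_WEIGHTS = {
    "Project Proposal Reports": 5,
    "Project Proposal Presentations": 5,
    "Project Milestone Reviews": 10,
    "Project Presentation (Possibly with Demo)": 10,
    "Final Project Report": 20,
}


def weighted_points(scores, weights):
    """Return percentage points earned, given per-component scores in [0, 1].

    This helper is an assumption for illustration, not the course's
    official formula.
    """
    return sum(weights[name] * scores[name] for name in weights)


# Consistency checks: the tables should add up as stated.
assert sum(COURSE_WEIGHTS.values()) == 100
assert sum(PROJECT_WEIGHTS.values()) == COURSE_WEIGHTS["Final Project"]

# Hypothetical scores: full marks everywhere except half credit on the report.
example_scores = {name: 1.0 for name in PROJECT_WEIGHTS}
example_scores["Final Project Report"] = 0.5
print(weighted_points(example_scores, PROJECT_WEIGHTS))  # 5 + 5 + 10 + 10 + 10 = 40.0
```

With these made-up scores, the project would contribute 40 of its possible 50 percentage points to the course grade.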

Grading Policies

Final Project (50%): Each student is required to work individually or in groups of up to three people on a research project. The project requires a 2-page proposal including the relevant literature survey, a proposal presentation, a 2-page milestone review, a 6-8 page final report (in double-column IEEE format), and a final presentation/demo. All page limits exclude references. Students who are taking the class for 4 units are required to work individually on their projects.

Student Presentations & Paper Reviews (40%): All students will get a chance to present multiple papers throughout the class during the reading days. Each paper will have two presenters, one discussing the pros of the paper and the other the cons. The presenters need to send conference-style reviews of their reading assignments by midnight the day before the class. The presentation grade is based on how well the material is presented in both the written review and the talk, how well it is connected to the other papers and the rest of the class, and how prepared the student is to answer questions from the class.

Students who are not presenting are still required to write a short review of each of the two papers presented on a reading day, due by noon on the day the papers are presented. The short reviews should be just a couple of sentences summarizing each of the two papers.

Pop-quizzes & Class Participation (10%): There are a few short pop quizzes throughout the quarter on lecture material and some of the paper readings. All students should participate in the discussions on each paper during the reading days.

Project Instructions

The research project should study a new research problem, e.g., design a new algorithm or study a new application. Literature surveys are not acceptable. The main deliverables of the project are:

Project Proposal Reports (5%): A 2-page proposal that defines the problem, surveys the literature on the problem, proposes a potential solution, and gives a timeline.

Project Proposal Presentation (5%): A short presentation discussing the proposal, limitations, and challenges.

Project Milestone Reviews (10%): A 1-2 page writeup that covers the progress so far, any needed changes to the goals, and the updated timeline. You should schedule a meeting with me or the CA to go over the milestone reviews.

Project Presentation, Possibly with Demo (10%): A short (~15-min) presentation reporting the final findings of the project.

Final Project Report (20%): A 6-8 page project report (in double-column IEEE format).

This class is partially based on the following existing courses:

Algorithmic Human-Robot Interaction (Berkeley)

Cooperative Machines (MIT)

Computer-Aided Verification (Berkeley)

Human-Robot Interaction (Georgia Tech)

© Dorsa Sadigh 2018